Reporting modernization

Problem

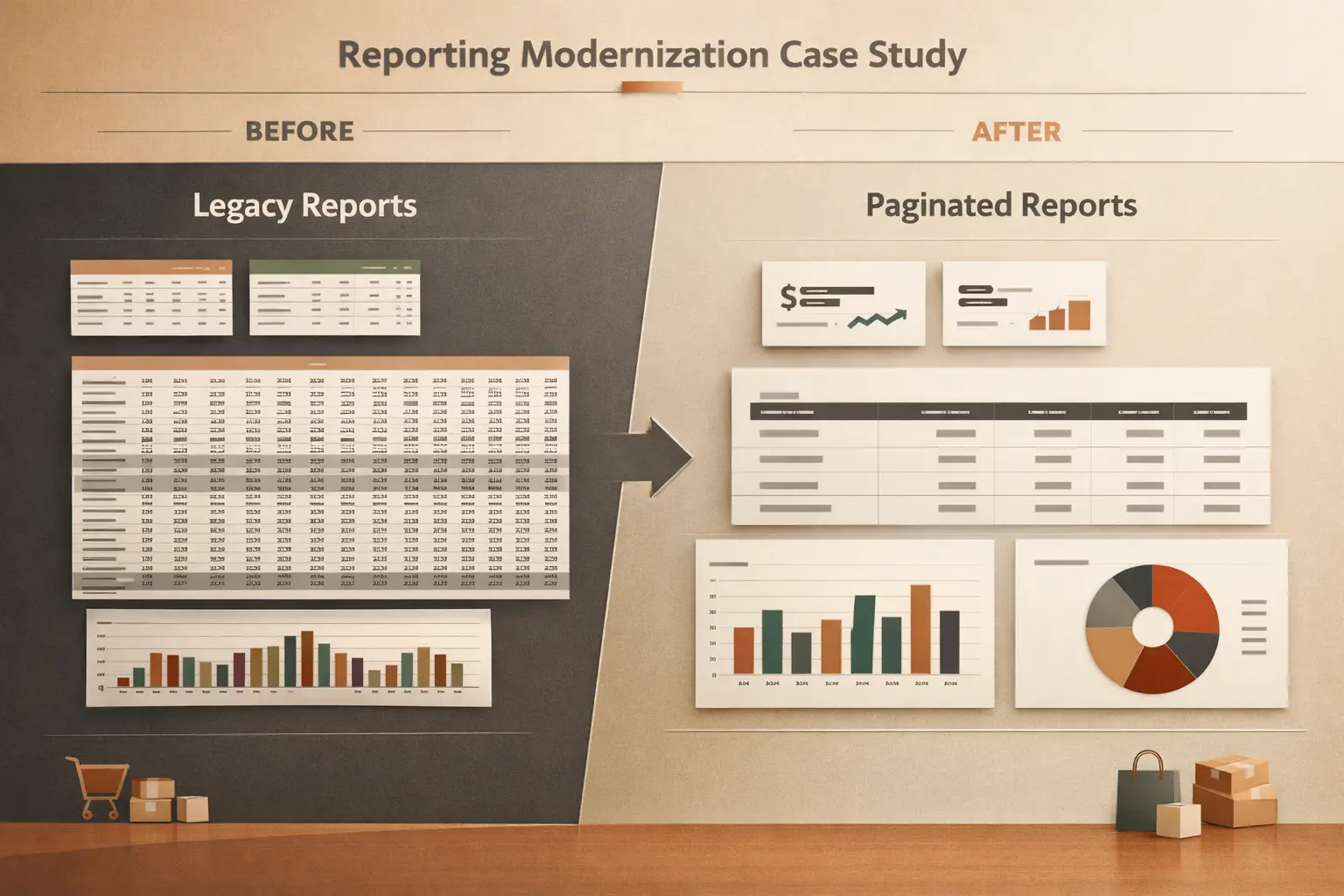

Legacy reports are fragile, hard to maintain, or built on platforms the team is moving away from. Stakeholders work around the reports instead of relying on them. Migration backlogs grow because no one has time to untangle the old logic and rebuild it properly.

How I help

- Cognos-to-Power BI migration with structure cleanup, not just visual replication

- Operational and management reporting in Power BI and Paginated Reports

- Report architecture decisions: what to rebuild, what to retire, what to consolidate

Outcome

Reports that are structured for maintainability, not just delivery. Stakeholders use the reporting directly instead of exporting to spreadsheets. The modernization backlog shrinks because each report is rebuilt with a clearer model and fewer dependencies.

Good fit when

- A reporting platform migration (for example, Cognos to Power BI) is planned or stalled and reports are in scope

- Existing reports are too fragile or complex for the current team to maintain confidently

- Stakeholders are asking for new reporting but the foundation is not ready

Engagement shape

- Duration: 4 to 12 weeks

- Team fit: Solo BI lead or a 2 to 5 person BI / analytics team

- Investment: Scoped per engagement

Not a fit when

The underlying data platform itself is being rebuilt. Reporting modernization works on stable data foundations, not while the sources and models beneath the reports are still changing.